Bringing Verified Web2 Data to Aztec with Primus zkTLS

Elena

Cryptography Engineer

Alex

Cryptography Engineer

HashCloak has been a highly reliable and technically strong partner to work with. Their team is responsive, detail-oriented, and consistently able to translate complex concepts into clear, practical deliverables.

Xiang Xie, CEO and Co-Founder of Primus Labs

The Aztec Network provides a powerful environment for privacy-friendly dApps, but there is no general mechanism to bring verified web2 data on-chain. We collaborated with Primus Labs to change this and unlock a new set of Aztec applications that use verified real-world inputs. We integrated Primus zkTLS, a protocol that uses Zero-Knowledge Proofs to verify TLS-secured web data, via a generic attestation verifier for Aztec smart contracts. This post walks through the problem, our implementation, and how to get started.

The Problem: No Verified Web2 Data on Aztec

Aztec Network currently has some built-in oracle capabilities designed for specific protocol-level functions, but there is no general-purpose mechanism for bringing authenticated external data onto its L2. Without a native way to verify such data directly on-chain, developers must rely on centralized intermediaries or external infrastructure. This increases trust assumptions, reduces composability, and constrains the range of applications possible on Aztec.

This is a significant gap, since many useful applications require trusted real-world inputs:

Market prices and financial data for DeFi protocols, DAO treasury management, or on-chain investor relations

KYC and credential attestations for compliance-driven applications

Supply chain and audit records for enterprise use cases

Any web2-originated data needed for privacy-preserving dApps

Primus zkTLS

Primus Labs provides the necessary infrastructure to solve this problem. zkTLS is a cryptographic protocol that uses Zero-Knowledge Proofs to prove authenticity of web data which has been transmitted using TLS. These "web proofs" convince us the data indeed came from a certain source, for example a bank, social media platform or trading platform. This enables any web data to be validated and provides a bridge between web2 and web3 while maintaining privacy for the users.

Primus' zkTLS technology can be used in two modes: MPC mode and proxy mode. The two modes offer different trade-offs between security assumptions and performance, and both are supported in our zkTLS solution for Aztec. In MPC mode, the Primus attestor and the client take part in a secure multi-party computation protocol to handle the TLS handshake. Alternatively, higher performance can be achieved if the attestor acts as a proxy between the user and the server, forwarding TLS data as a middleman. In this setting no MPC is needed, but it introduces a new security assumption. In both cases, the efficient and lightweight protocol QuickSilver is used, which was developed by the Primus team.

The zkTLS mode that makes our integration with Aztec powerful is called zkTLS DVC, Data Verification and Computation, which not only lets us authenticate the web data but also allows us to do verified computations on it. In the next section we'll dive further into how this works and how we incorporated this functionality for Aztec.

From TLS Traffic to Verifiable Attestations using Primus

Primus provides attestations over the full web data. These attestations contain the information needed to check the integrity and authenticity of the web data, while maintaining the privacy of that data. In addition, we can locally obtain the accompanying private data, which will be used for additional checks or other computations in the Aztec App. The attestations make this much more feasible, because only a portion of the actual payload has to be sent and verified instead of the full TLS data the webpage provides.

We are working with DVC mode which aims to not only verify the data, but also allow any type of computation on the underlying private data in a privacy friendly manner. In the standard DVC workflow, this is achieved by doing attestation verification and the business logic checks in a zkVM inside a TEE. For this project we are replacing zkVM+TEE with the private execution in an Aztec smart contract.

So the first step is to use Primus Network to obtain an attestation of the web data you are interested in. In addition to the attestation itself, you are provided with a signature of this attestation and additional metadata that you can use for verifying the private data that will be used for computation. Then locally you can obtain the required plaintext (the private data) which will correspond to the attestation. To verify the attestation you need to do 3 things: verify the attestation signature, check the source URL is as you expected and verify the correctness of the response. We'll dive into what this last part means.

There are 2 types of attestations: hash-based and commitment-based. For the first type, a SHA256 hash is returned in the attestation data for each queried plaintext message. To verify correctness, each plaintext message is hashed and the result is compared to the hash received in the attestation. For the commitment-based approach, on the other hand, a plaintext message is divided into 253-bit parts and a commitment to each of these parts is returned. To verify correctness here, we receive the randomness used for each commitment in addition to the plaintexts. Depending on your use-case you can pick either approach when obtaining the attestations. Primus Labs says the following about the two options:

Hash-based attestation is well-suited for low-cost integrity anchoring, where users can generate private attestations off-chain and store the hashed attestation on-chain.

Commitment-based attestation is more appropriate for hide-and-reveal scenarios, such as voting or sealed bids, where the committed value will later be used in the zk circuits.
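To make the 253-bit chunking concrete, the sketch below estimates how many commitments a plaintext message needs. It assumes the message bytes are packed back to back into consecutive 253-bit parts; the exact packing used by Primus may differ, and the function name is ours, not part of any SDK.

```typescript
// Chunk width taken from the text: each commitment covers a 253-bit part.
const CHUNK_BITS = 253;

// Estimated number of commitments for a plaintext of `byteLength` bytes.
// Assumption: bytes are packed contiguously into 253-bit parts.
function commitmentCount(byteLength: number): number {
  if (byteLength <= 0) return 0;
  return Math.ceil((byteLength * 8) / CHUNK_BITS);
}

// A hash-based attestation, by contrast, carries one SHA256 digest per
// plaintext message, regardless of the message length.
const shortMessage = commitmentCount(31); // 248 bits fit in a single part
const longMessage = commitmentCount(1024); // 8192 bits need many parts
```

Under this assumption a message of up to 31 bytes needs a single commitment, while every additional 253 bits adds one more commitment and one more in-circuit check.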

As an example of how this could work in practice, take data from GitHub with the profile information of a user. In the Aztec App we might want to check that the user has 10+ contributions to a specific repo, a certain number of repository stars or followers, or is a member of a specific organization. Using the attestation we only have to verify the source URL, the signature over the attestation and either a hash of or a commitment to the private data. Then we can perform the checks on the private data according to the business logic. Finally, any on-chain action on Aztec can be taken after verifying the web2 data, such as giving the user certain access, using the verified data in another transaction, storing something in the contract after verification is complete, or any other follow-up action that is needed for your use-case.

Details on obtaining the attestations

To obtain an attestation using the DVC mode, Primus has developed a demo that can be used as an example. Additionally, we published a tutorial on how to incorporate zkTLS into an Aztec App, which contains the full flow starting at obtaining an attestation. The important part to highlight from this is that we can specify the URL to query as well as the "parsePath" for the exact data you want to obtain. For the case of Aztec, it is good practice to make this query as specific as possible, such that the private data that is returned is as short as possible. If it is necessary to obtain multiple pieces of private data, they can be returned within the same attestation.

Specifically, a developer needs to define what URL to query, for example the contributors endpoint of a GitHub repo.

Then, the exact paths to query are specified in the responseResolve information, for example the username (login) and id of the first GitHub contributor (for all the details of what these values mean, we refer to the tutorial).

Alternatively, but not recommended, you could query a single path $.[0] that returns the full Github profile. Then in the Aztec private function (the circuit) this JSON data needs to be parsed before any checks can be done. As parsing within a circuit is very expensive, we don't recommend this approach. It will be more performant to obtain each separate piece of data as an object in the responseResolve to avoid in-circuit parsing and be able to do assertions directly.
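The difference between querying specific paths and querying the whole object can be sketched in plain TypeScript. This is not the Primus SDK: the object shapes, the keyName field, the OWNER/REPO placeholder and the tiny path resolver are all assumptions for illustration, and the paths simply extend the $.[0] notation used above.

```typescript
// Illustrative shapes only; see the tutorial for the real request format.
interface ResponseResolve {
  keyName: string; // hypothetical label for the extracted value
  parsePath: string; // path into the JSON response
}

const request = {
  // Placeholder repository: substitute the repo you want to attest.
  url: "https://api.github.com/repos/OWNER/REPO/contributors",
  method: "GET",
};

// One entry per piece of private data, so no JSON parsing is needed in-circuit.
const responseResolves: ResponseResolve[] = [
  { keyName: "login", parsePath: "$.[0].login" },
  { keyName: "id", parsePath: "$.[0].id" },
];

// Minimal resolver for simple paths (property names and array indices only).
// It is not a full JSONPath implementation.
function resolvePath(doc: unknown, path: string): unknown {
  const tokens: string[] = path.replace(/^\$\.?/, "").match(/[^.\[\]]+/g) || [];
  let current: any = doc;
  for (const token of tokens) {
    if (current === null || current === undefined) return undefined;
    current = current[/^\d+$/.test(token) ? Number(token) : token];
  }
  return current;
}

// Collects the small plaintext messages that would end up as private data.
function extractPrivateData(
  doc: unknown,
  resolves: ResponseResolve[]
): { [key: string]: string } {
  const out: { [key: string]: string } = {};
  for (const r of resolves) {
    out[r.keyName] = String(resolvePath(doc, r.parsePath));
  }
  return out;
}
```

With two narrow paths the private data consists of two short strings that can be asserted on directly, whereas the single path $.[0] would hand the circuit a whole JSON object to parse.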

Building the Attestation Verifier: Architecture and Design Decisions

To fully unlock the power of zkTLS on Aztec, we need to be able to verify the attestations in an Aztec smart contract. The goal of this project was to open up web2 data to any Aztec app via Primus zkTLS and thus the Aztec Attestation Verifier we built is designed to be generic. Developers will be able to use the SDK and libraries to incorporate it for any type of use-case. In this part we'll dive into how we achieved this, while in the next section we share more on how an Aztec developer can use it.

To verify the attestation there are 3 steps to take: verify a signature, check that the URL comes from a source that you allow, and check the correctness of the private information with respect to the attestation. Since all of this has to happen within an Aztec smart contract, we have to take function input restrictions and smart contract restrictions into account. Our challenge was to make all the verifications work within these limits, while making this as developer friendly as possible. At the core of our solution are an Aztec smart contract template and a Noir library that provide the generic verification logic; the contract template uses the Noir library. Then there is a TypeScript parsing library that handles the conversion from the attestation JSON to the contract inputs. The Aztec Attestation SDK can be used to create the full workflow, from deployment to sending the transaction for verification. Finally, we provide a few example smart contracts with their calling scripts to show how the full Aztec side works.

Designing for Privacy: Circuit and Input Constraints on Aztec

The first important choice is that we're using a private function in the smart contract. This allows us to use the private data as input and make sure privacy is preserved when the computations or checks on the attestation data are done. For Aztec, private functions are translated to circuits and will be executed client-side in the Private Execution Environment (PXE). This execution generates proofs that are verified on-chain. A public function would not work for this use-case, because we are working with private data alongside the attestation data and this must, of course, stay private.

Since Aztec doesn't support contract inheritance, we opted to provide a smart contract template that developers can either complete or extract the necessary parts from. They can also directly call the Noir library that contains the checks we'll describe now.

The next observation is that inputting the full attestation to the private function, parsing it and performing the checks is not the way to go, because it will blow up the circuit and it will most probably overflow the input limits for smart contract functions. Therefore, as input we take the separate elements we use for the checks:

attestor public key (public_key_x and public_key_y)

hash, the hash of the encoded attestation data, needed for verification of the signature

the signature

request_urls, where the data comes from (according to the attestation)

allowed_urls, the set of currently allowed URLs, according to the dApp

In addition we have inputs for the private data verification, depending on the chosen algorithm. If the approach is hash-based, we need data_hashes and the private data contents. If we're going with commitment-based data we need the commitments coms, the randomness values rnds used, the private data msgs_chunks and the point H, which is fixed for the application. These inputs are used in the library code.

We didn't want developers to have to extract and format all of those separate inputs, so they can use the parsing library that takes care of the conversion. For an even smoother experience, the Aztec Attestation SDK can be used, which handles parsing, deployment and contract calls (and calls the parsing library internally).

Implementing the generic checks

Verifying the signature can be done easily using the existing std::ecdsa_secp256k1::verify_signature function from the standard library available on Aztec. The optimization here is to directly input the hash into the function, instead of computing the value within the circuit (the private function), which would be much more expensive.

For the next step, verifying whether the request URL(s) are among the allowed URLs for the dApp, we need a bit more strategy. Since we are in a private function, it is not possible to "query" public data from an Aztec smart contract directly, because private functions are executed client-side and only added on-chain later, upon proof verification and transaction inclusion by the sequencers. Instead, the strategy is reversed: you input the public data to the private function, and at the end of the execution call a public function to check that this public data was indeed correct against the contract storage. Check the Aztec docs for more details on this.

In this case we store hashes of the allowed URLs in the contract storage to optimize and make it possible to store several URLs. In the private function we check that the request URL(s) start with any of the allowed URLs, and return the hash of the allowed URL that matches. To do the substring check we use the noir_string_search library. For example, the dApp allows any request from https://x.com and the request URL of the attestation data equals https://x.com/settings/account. This will pass verification and the function returns the hash of https://x.com.
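The prefix rule can be sketched off-circuit in a few lines of TypeScript. This is only a model of the check described above: the function name is hypothetical, and it returns the matching allowed URL itself where the contract would return its hash.

```typescript
// Returns the first allowed URL that the request URL starts with, or null.
// In the contract the Poseidon hash of this URL is returned instead, so that
// it can be compared against the hashes in public storage.
function matchAllowedUrl(requestUrl: string, allowedUrls: string[]): string | null {
  for (const allowed of allowedUrls) {
    // Plain prefix comparison; empty entries never match.
    if (allowed.length > 0 && requestUrl.slice(0, allowed.length) === allowed) {
      return allowed;
    }
  }
  return null;
}

const matched = matchAllowedUrl("https://x.com/settings/account", [
  "https://github.com",
  "https://x.com",
]);
```

Because this sketch is a plain prefix comparison, an allowed entry such as https://x.com would also match https://x.com.example.org/login. Ending allowed entries with a trailing slash avoids that in this model.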

We must note that hashing the allowed URL adds some cost in the circuit, but this is a trade-off that is necessary in order to do a public storage check at all. If a URL should support a maximum length of 1024 bytes, this would be very inefficient to store in the contract storage (and at this point unsupported). In comparison, using the Poseidon hash the value can be stored in a single Field type.

For the final (generic) check, there are two options, based on whether you're inputting the commitment-based information or the hash-based data. For the first approach, we check for each plaintext message whether the commitments for that message are correct. Depending on the size of the message, there can be 1 or more commitments. For each array of commitments per plaintext message we receive the corresponding randomness and check that for all i we have coms[i] == msg_chunk[i]*G + rnds[i]*H in embedded curve arithmetic. Here, G is the standard base point of the curve and H is a fixed generator point per application. The necessary operations once more come from the standard library, in std::embedded_curve_ops. For the hash-based approach we simply hash each private message contents[i] and check that it is equal to the input data_hashes[i].
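The shape of the commitment check can be illustrated with a toy example. The sketch below is a Pedersen-style commitment in a tiny multiplicative group modulo a prime, so msg*G + rnd*H becomes G^msg * H^rnd mod P. The group, the parameters and the function names are assumptions chosen to keep the numbers small; this is not the embedded curve used in the Noir library and it offers no security.

```typescript
// Toy parameters: P = 2*Q + 1 is a safe prime, G and H generate the
// subgroup of order Q. Far too small for real use.
const P = 1019;
const Q = 509;
const G = 4;
const H = 9;

// Square-and-multiply modular exponentiation (values stay below P*P).
function modPow(base: number, exp: number, mod: number): number {
  let result = 1;
  let b = base % mod;
  let e = exp;
  while (e > 0) {
    if (e % 2 === 1) result = (result * b) % mod;
    b = (b * b) % mod;
    e = Math.floor(e / 2);
  }
  return result;
}

// Commitment to one message chunk with randomness rnd.
function commit(msgChunk: number, rnd: number): number {
  return (modPow(G, msgChunk % Q, P) * modPow(H, rnd % Q, P)) % P;
}

// Mirrors the per-index check: coms[i] must open to (msgChunks[i], rnds[i]).
function verifyCommitments(coms: number[], msgChunks: number[], rnds: number[]): boolean {
  if (coms.length !== msgChunks.length || coms.length !== rnds.length) return false;
  return coms.every((c, i) => c === commit(msgChunks[i], rnds[i]));
}
```

The verifier recomputes each commitment from the claimed chunk and randomness and compares it with the attested value, so changing a single chunk makes the check fail for that index.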

Making it business-case specific

Finally, when all the checks have been performed we need to do 2 things. First of all, we still need to do a "public check" that the allowed URL that matched the request URL is indeed present in the public storage of the contract. Secondly, you'll want to do some business logic check on the private data, which is ultimately the purpose of the attestation verification. Note, however, that in practice these things happen in reverse order, since a public function call made from a private Aztec function is only enqueued during private execution and is executed later, when the sequencer processes the transaction.
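The ordering can be modelled in plain TypeScript. None of the names below are Aztec APIs; the class only mimics the control flow, with strings standing in for the stored URL hashes and a balance threshold standing in for the business logic.

```typescript
type PublicCall = () => void;

// Toy model of a contract with one private entry point and one public check.
class ModelContract {
  private allowedUrlHashes: string[];
  // Public calls enqueued during private execution, run later by the sequencer.
  private enqueued: PublicCall[] = [];
  readonly log: string[] = [];

  constructor(allowedUrlHashes: string[]) {
    this.allowedUrlHashes = allowedUrlHashes;
  }

  // Client-side "private function": it cannot read public storage, so it
  // takes the claimed hash as input and defers the storage check.
  verifyPrivately(claimedUrlHash: string, balance: number): void {
    this.enqueued.push(() => this.checkAllowedUrl(claimedUrlHash));
    if (!(balance > 0.1)) throw new Error("business logic check failed");
    this.log.push("private: business logic check passed");
  }

  // "Public function": compares the claimed hash against contract storage.
  private checkAllowedUrl(urlHash: string): void {
    if (this.allowedUrlHashes.indexOf(urlHash) === -1) {
      throw new Error("URL hash is not in public storage");
    }
    this.log.push("public: allowed URL hash found in storage");
  }

  // Stand-in for the sequencer executing the enqueued public calls.
  runSequencer(): void {
    const calls = this.enqueued;
    this.enqueued = [];
    calls.forEach((call) => call());
  }
}
```

In this model the business logic check always runs first, on the client, and a wrong URL hash is only detected once the enqueued public call is executed.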

What makes the code use-case specific are the checks incorporated on the private data that was queried. In the contract template, this is indicated with // TODO insert checks on msgs here for the commitment verification and here for the hash-based verification. As explained in the section above on how to obtain the attestations from Primus, the aim is for this private data to be as small as possible, since this avoids the need to parse JSON data in the circuit. (However, if this is needed there is a JSON parser library for Noir that can be used, and we recommend our fork for easy use.)

An example of what might be checked: if the web data is coming from Binance and contains the balance for ETH, the application could check that the value is > 0.1. We also added two example contracts with their calling scripts to the example folder.

A note on the use of Poseidon(2)

In light of the recent attack on Poseidon2, Ethereum announced "Poseidon2 is out, Poseidon1 is in" during the 8th Ethproofs call. Prior to this development, Poseidon2 was favoured over Poseidon1 because of its performance, especially in zk circuits.

For this project we are also using Poseidon2, specifically from the Noir library poseidon, for hashing the allowed URL. This is done when storing the hash in smart contract storage and again at the end of attestation verification, where the matching allowed URL is hashed and returned. The size of the URL can be set by developers, but should be assumed to have a decent size. In the 2 examples we added, the maximum array sizes are 96 and 128 respectively. Currently, the poseidon library supports a maximum size of 16 elements for Poseidon1, which does not cover this functionality. We expect this library to be updated soon and then we can swap Poseidon2 for Poseidon1.

Gatecounts

Since we are working with private functions in an Aztec smart contract and this is translated into a circuit in Noir, we can obtain the gatecount to analyze its performance. Additionally we have scripts to execute the verification transactions on a local network. This is extremely fast and does not give a good reference for how it will work with an external network. Previous versions of this library did testing on devnet as well, but devnet has recently been removed by Aztec. Therefore, we focus on the gatecounts for this section.

The number of gates can be generated per private smart contract function by profiling the function. The circuit size has been obtained for four example verification functions. We implemented 2 different use-cases, each with a hash-based attestation and a commitment-based attestation. For the GitHub example, the first alphabetical contributor of a repository is queried. Then, in the private function it is checked that the username and GitHub id are as expected. For the OKX example, the status of the trading pair BTC-USD is retrieved and it is checked that this is equal to "live".

For both examples we should note that the aim was to keep the plaintext messages (in the private data part of the attestation) as small as possible. This allows us to do a check directly on that data rather than needing to parse the data first. The difference between the GitHub and the OKX example is that the first example queries 2 different pieces of private data and the second example only 1.

| Method | Circuit size (gates) |
|---|---|
| GithubVerifier:verify_comm | 91,497 |
| GithubVerifier:verify_hash | 104,486 |
| OKXVerifier:verify_comm | 73,950 |
| OKXVerifier:verify_hash | 81,378 |

As long as the plaintext messages are relatively small, the gate counts for the commitment-based and hash-based approaches are comparable. However, for longer plaintext messages, more commitments will be needed and more checks have to be performed; this scales linearly. But the real cost of longer messages will not be the increased number of commitment checks; it is the cost of the larger input size itself and the (very probable) need to parse that input.

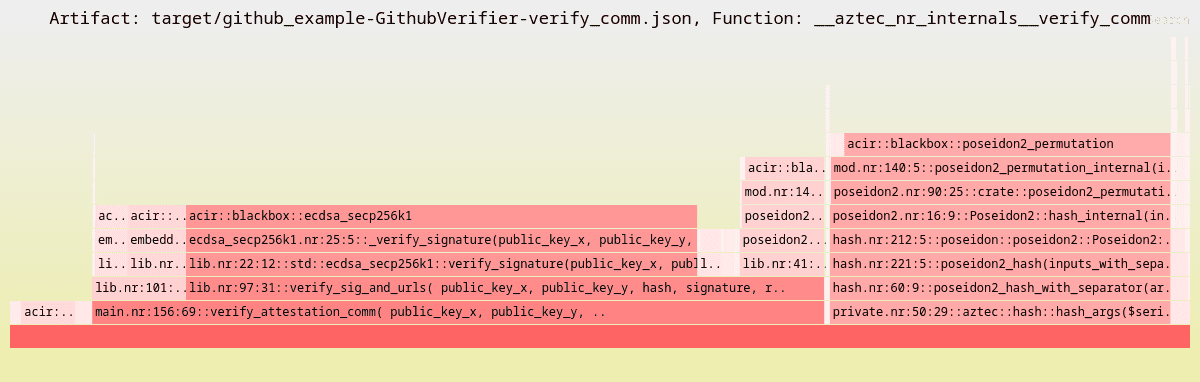

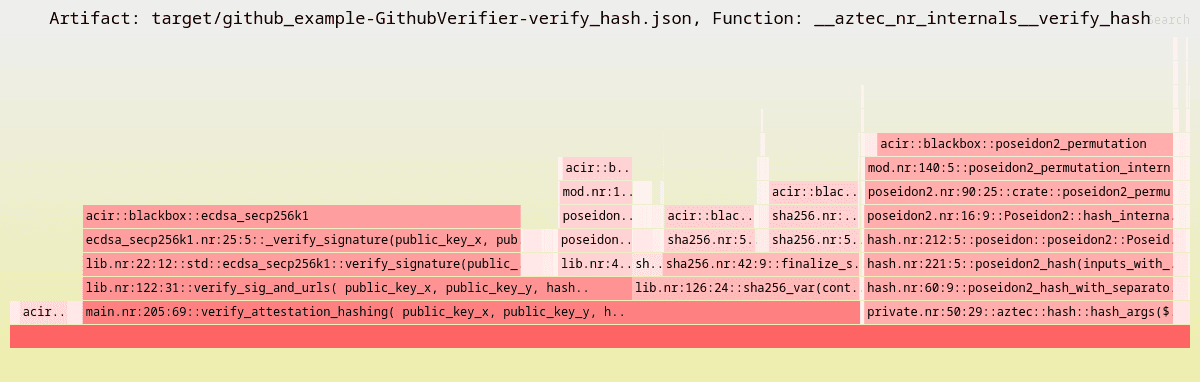

To get more insight into where the gate costs go for private functions, Aztec has the option to generate flamegraphs. The flamegraphs for the 4 example verification functions can be found here. The images below don't show all the details, but we can see the two parts that make up the majority of the gates: the verification of the attestation and something that is called hash_args. The latter is the cost of calling a public function at the end of a private function, which we do to verify the allowed URL (and in the case of commitment-based attestation also point H) against the public value in contract storage.

Within the attestation verification functionality, approximately 39k gates are used for the ECDSA signature verification and ~6.5k gates to hash the allowed URL in order to compare it to public storage. In this example, verifying the commitments costs 7.2k gates, versus 20k gates for checking the hashes. This accounts for the difference between the two approaches that we see in the table.

In this example, where it is checked that a contributor to a certain GitHub repository has the expected username and GitHub id, the actual "business logic" checks on the private data are very cheap: both cost 50 gates and barely show up in the flamegraphs.

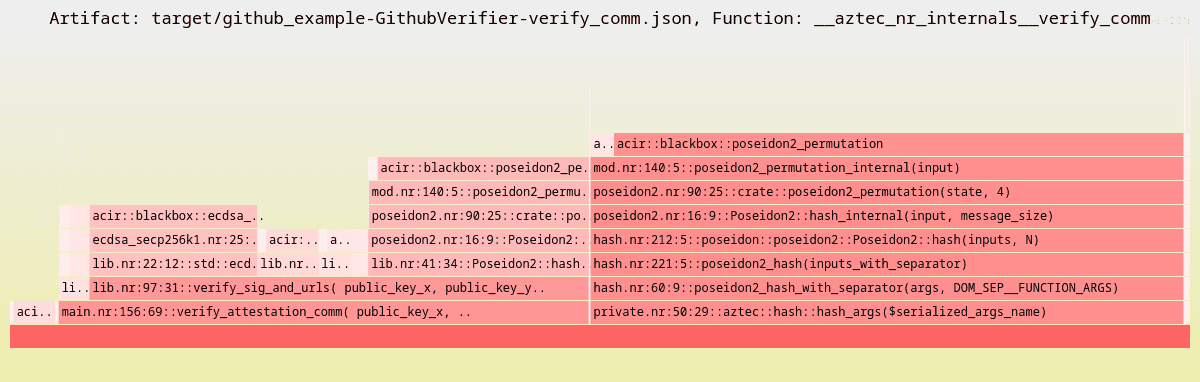

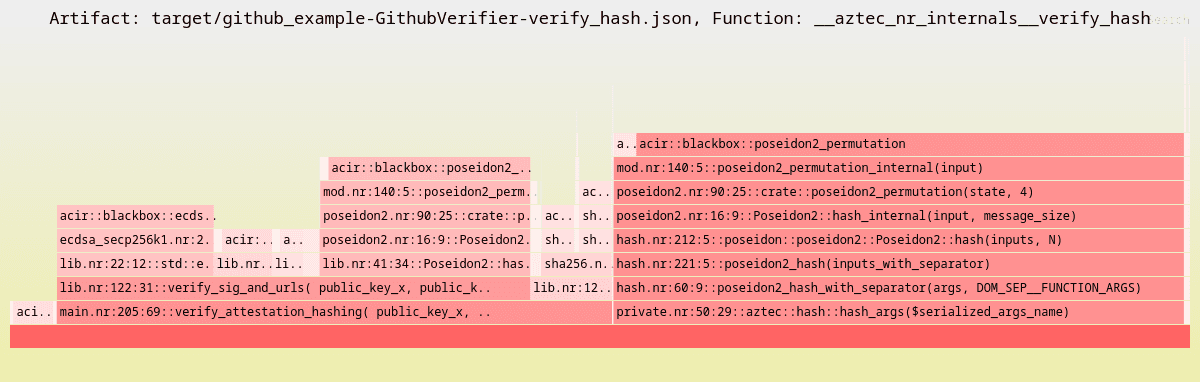

To show the cost of larger input sizes, independent of needing to parse them, below are the flamegraphs for the exact same examples as above, but with a much higher limit on the maximum size of a request URL: 1024 bytes versus 128 bytes. For more details, these flamegraphs can be checked out here.

This increases the commitment-based verification to 279,273 gates and the hash-based verification to 292,262 gates. The increased cost is visible in the verification of the attestation and in hash_args, but in both cases it is caused by the need to hash larger inputs. In the first case, the cost of hashing the allowed URL (which is padded to the maximum length) increases to 51k gates instead of 6.5k. Where hash_args cost ~26k in the previous example, with this increased input size it costs almost 140k (!) gates.

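In general, the gate count roughly triples for both use-cases (about 3.05x for the commitment-based and 2.80x for the hash-based verification, an increase of about 205% and 180% respectively). This shows it is essential to pick the maximum sizes of all inputs as tightly as possible, as well as to use plaintext messages that are smaller rather than larger.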
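The growth can be recomputed from the gate counts reported above for the GitHub example. The snippet below is only arithmetic on those published numbers; the helper and field names are ours.

```typescript
interface GateCounts {
  url128: number; // gates with a 128-byte maximum request URL
  url1024: number; // gates with a 1024-byte maximum request URL
}

// Gate counts for the GitHub example, copied from the text.
const reported: { verify_comm: GateCounts; verify_hash: GateCounts } = {
  verify_comm: { url128: 91497, url1024: 279273 },
  verify_hash: { url128: 104486, url1024: 292262 },
};

// Absolute and relative growth when raising the URL limit.
function growth(c: GateCounts): { added: number; factor: number; increasePercent: number } {
  const factor = c.url1024 / c.url128;
  return {
    added: c.url1024 - c.url128,
    factor: factor,
    increasePercent: Math.round((factor - 1) * 100),
  };
}

const commGrowth = growth(reported.verify_comm);
const hashGrowth = growth(reported.verify_hash);
```

Both variants gain exactly the same number of gates (187,776), which fits the explanation that the extra cost comes from hashing larger inputs and not from the commitment or hash checks on the private data.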

Developer Guide: Three Steps to Integrate zkTLS on Aztec

This post has laid out how we made Primus zkTLS usable on Aztec, but for Aztec developers it is not necessary to know all these implementation details. In this last section we'll briefly discuss how devs can actually use the artifacts we have built and hopefully create exciting new types of apps for us to use. We have a complete tutorial here.

Adding zkTLS to an Aztec app takes three steps:

Obtain a zkTLS attestation using Primus

Add verification to your Aztec contract

Parse and submit the attestation on-chain

For the first step it is the DVC (Data Verification and Computation) mode of Primus that is used to obtain the correct attestation. This allows us to obtain web data from any URL, whether publicly available or behind an API that you access with a token. The result is an attestation JSON file that can be saved locally in order to use it in the next steps.

For a new Aztec project, developers can use our contract template to get started with the outline necessary to verify an attestation. Otherwise, the necessary elements can be added to the existing contract. This will consist of adding the verification function, with the custom checks on the private data incorporated, and adding the allowed URLs to contract storage. The developer can pick between hash-based or commitment-based attestations, or support them both. If the commitment-based approach is chosen, it is necessary for the dApp to pick a public point H that will be used throughout the application and stored in contract storage as well. Note that currently there is a fixed H value used on the Primus side, which is defined here. However, this will be adjustable via the zkTLS API in the future, and H should therefore be in contract storage.

Finally, for step 3 all the pieces are connected together. The attestation JSON content needs to make its way to the Aztec contract that can do the verification. As explained before, it is undesirable to send the full content as is, because this would be detrimental to the performance. Instead, the right parts need to be extracted or hashed and sent as input. And to make this final step easy we provide the Aztec Attestation SDK, or for more low-level functionality the developer can also use the Attestation Parsing library.

Conclusion

This is the first step towards making web2 data a first-class citizen on Aztec, enabled by the DVC mode for zkTLS by Primus. As the Aztec ecosystem matures and performance improves, we expect applications to be able to use this building block for a wide range of use-cases. Everything we built is open source and can be found here.

HashCloak specializes in enabling teams with the expertise to build privacy infrastructure leveraging the state of the art in advanced cryptography.